If you have managed a cloud data platform, you have undoubtedly gotten that call. You know the one, it's usually from finance or the office of the CFO, inquiring about your monthly spend. And it usually comes in one of two forms:

This usage trend is on track to exceed our annual budget and contract. WTF?

What the hell is going on with usage? It shot up by 30% last month!

While both are clear and present dangers to cloud data platform owners, they don’t have to be. In this post, I’ll share four essential components of a cost control capability for your cloud data platform (CDP.) I’ll provide some examples using Snowflake, but the features and principles will also apply to other CDPs.

Financial management (FinOps) for cloud infrastructure is one of the most pressing issues for organizations today. With Gartner now predicting cloud data consumption will rise to 75% of all cloud data, up from less than 10% in 2016, it’s not surprising to see new products like CapitalOne SlingShot or CloudZero, which help organizations manage the cost of the Snowflake data platform. These principles will apply whether you use a 3rd party tool, the CDP’s tools, or build custom solutions.

Visibility

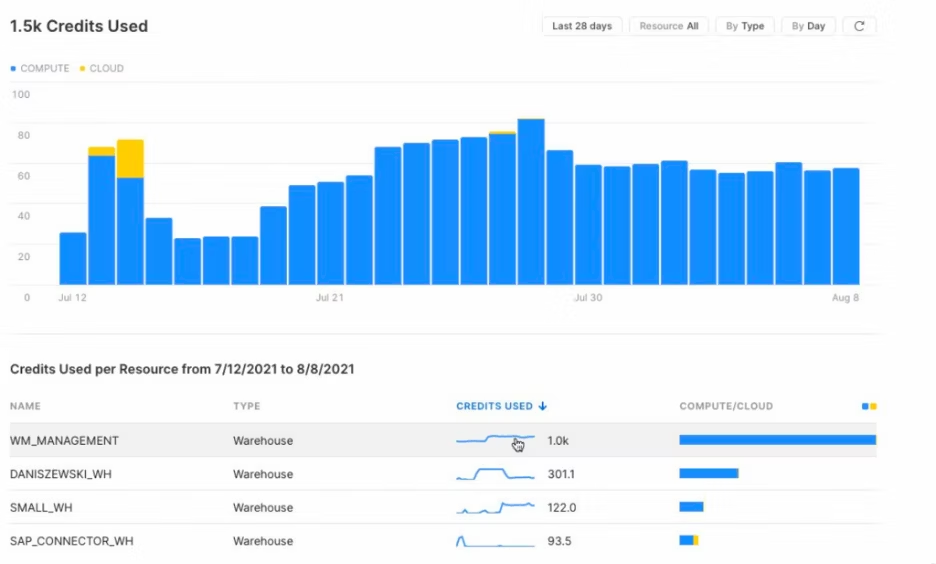

We’ve all heard the saying, “if you can’t measure it, you can’t manage it.” It’s true for controlling cost and is the starting point for developing our cost control capabilities. With calls for more visibility into usage and cost, cloud data platform providers realize that more observability is good for both customers and vendors. Many have begun providing dashboards and user interfaces for increased visibility. Although having a single pane of glass that offers a view into real-time or near real-time usage metrics is critical, cost control capabilities will need to go deeper - because incurring a $20K overrun from a killer query now happens too easily.

An essential part of surfacing usage insights is tagging your resources with additional metadata. Cloud data platforms have rich metadata capabilities that vendors make available to customers. Attaching metadata or tags to the granular usage data will provide valuable insights into usage, performance, and cost. You can now better understand the usage and cost associated with a resource and align it with the line of business to understand the value. Be sure to apply metadata to all cost-incurring resources such as compute, tasks or storage. For example, Snowflake supports query tagging at the user and session level. Every query execution will log the metadata in the query history.

ALTER USER sonnyrivera SET query_tag = ‘finops analysis’;

ALTER session SET query_tag = ‘finops analysis’;

SELECT query_id,

query_tag

FROM snowflake.account_usage.query_history

WHERE query_tag = ‘finops analysis’

It is worth noting that developing a quality taxonomy for tags will enable us to do much more than cost and usage controls. For example, platforms offer metadata-driven policies which dynamically apply privacy and access control policies.

The compute cost is often the most significant portion of the cloud data cost, so it makes sense to track it closely. Here again, attaching additional metadata to virtual warehouses (elastic compute) will provide further insights. Additionally, tools like dbt enable metadata tagging when developing data models.

There are a few other resources to tag and review besides the virtual warehouse. Take a hard look at the “serverless” platform features as well. Serverless features are great for ease of use but can lead to unseen and unmanaged costs. These fall into the categories:

Automated ingestion like Snowflake’s Snowpipe.

Background or scheduled tasks.

Automated re-indexing or re-clustering.

Here are a couple of tips:

First, tag every cost-incurring resource with additional metadata.

Ensure all virtual warehouses have auto-suspend and auto-restart configured.

Visualize usage trends. Even a simple forecast can save thousands of dollars.

Visualize and track the total number of virtual warehouses over time to reduce compute sprawl.

Measure the utilization of the warehouses — too high usually means high concurrency or undersized resources, while too low means wasted consumption.

Visualize the usage and costs for all environments (e.g., development, QA, production.)

Tag and monitor real-time streaming or change data capture (CDC) separately from other compute services (warehouse for analytics, data feeds, etc.)

Experiment and simplify.

Monitors and Controls

So, now that you have visualized your insights, let's follow that with actions. First, your cost controls capability needs proactive monitoring and automated controls.

Resources monitoring and alerting are critical components of cost controls, and these features vary by CDP. Therefore, review the CDP's monitoring and alerting capabilities to understand better which levers you can pull.

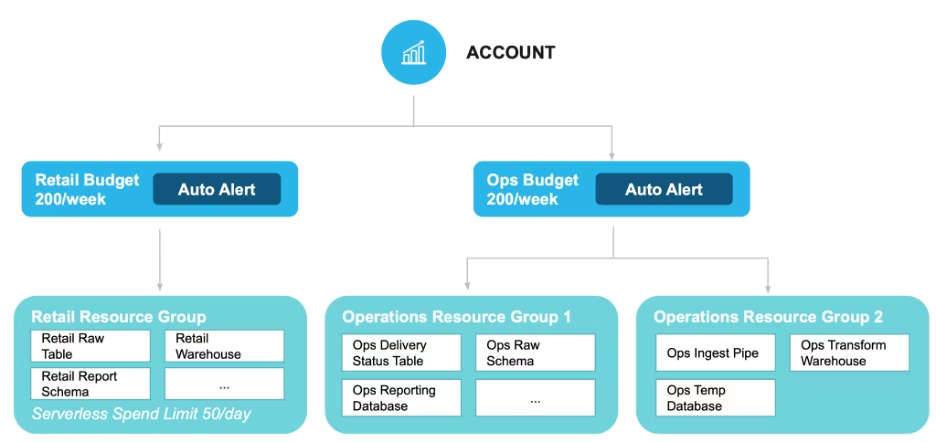

For an example, let’s examine Snowflake’s approach. Snowflake has resource monitors at the account and warehouse levels. Resource monitors track usage, compare them to quotas, and perform actions. It is worth noting that multiple resources can also be grouped into a single resource monitor. Snowflake’s resource monitors support three types of actions:

Notification only.

Notification and Suspend.

Notification and Suspend immediately.

Here are a few best practices for monitoring and alerting:

Create a resource monitor for each organizational account that provides only alerting.

Monitor all cost-incurring resources using quotas, notifications, and service suspension actions or rules.

Add metadata tags to all serverless features that fall outside of resource monitoring.

Use additional custom actions to alert and suspend services where needed.

Start Getting Better Insights

Optimization

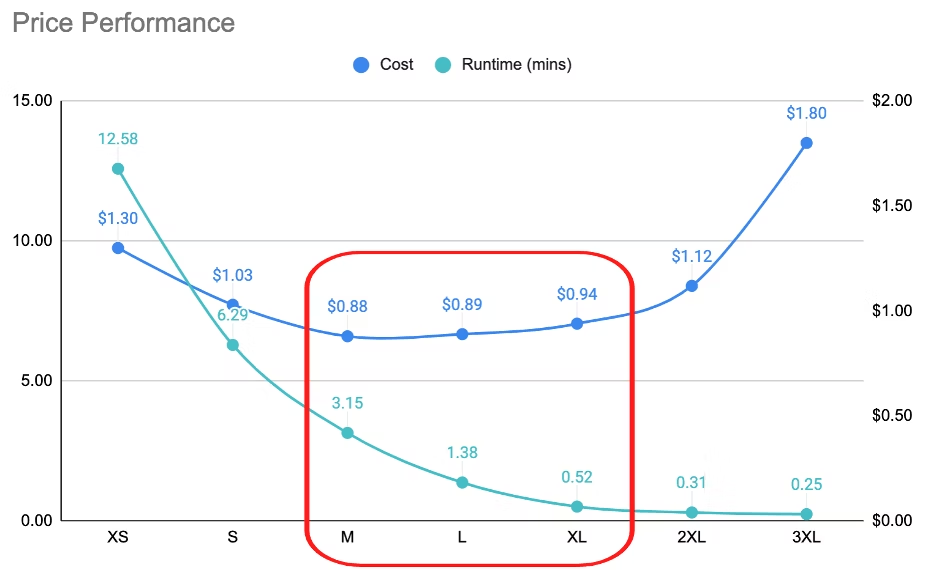

Price performance is the relationship between costs versus performance, and it’s not always easy to understand or interpret. So, when it comes to consumption-based pricing, price performance is another form of cost control. Look at the diagram below; why does x-small compute cost more than x-large while still having poorer performance? Why does going from 2x-large to 3x-large increase cost by over 60% and provide little performance gain? In the first case, it may be due to data spillage, effectively trashing, by the compute resources. In the second case, compute has been over-provisioned, so there are excess compute resources for the job.

Let’s face it; data solutions must embrace cloud-first or cloud-native approaches because the old methods used for on-premises solutions aren’t well suited for the cloud. Long-running queries, poorly designed models, and needlessly scheduled data pipelines are candidates for control cost and an opportunity to delight our stakeholders.

With some refactoring focused on performance optimization, you can save 15-20% on computing costs. Try some of these cloud cost optimization tips:

Watch out for data spillage, the equivalent of your computer thrashing or writing data to disk due to low memory. Cloud data platforms do this, too, and it usually indicates undersized compute.

Properly size your compute for the workload. Over-provisioning for small queries will not improve performance. Likewise, under-provisioning will not save money on large queries. Due to the “data spillage” issue listed above, it will usually cost more.

Ensure your analytics tools support “join optimization” (include only the minimum number of joins required).

Develop separate pipeline and replication schedules by the environment. There are massive savings by sizing each environment properly. For example, development and integration environments may need less compute resources, few and smaller data pipelines, and costly real-time replication may be reduced to daily or on-demand replication.

As always, simplify wherever you can.

Collaboration

Collaborate, collaborate, collaborate. Create a cost control team with stakeholders from architecture, engineering, analyst, and business departments. Having a diverse group dramatically increases the impact of any cost control initiative. The typical time commitment is 30 minutes weekly, even less once the process starts. This team sets priorities, leverages technical expertise, and ensures stakeholder buy-in.

Quick wins are crucial for the long-term success of the team. Know this; I like to create aggressive 30, 60, and 90-day goals for the team.

Pro tip: Don’t be afraid to make Big Hairy Audacious Goal or a BHAG, as management guru Jim Collins calls them. Often, these are easily achieved because there is so much low-hanging fruit. For example, in my last role as a data leader in financial services, we had a BHAG of 15% reduction compute credits in 30 days. A few months later, a peer called asking, “What did you do? We were using compute growth as a proxy measure for data ingestion progress & productivity?” Aside from highlighting the wrong metric for data ingestion progress, our early quick wins in cost controls were a huge success.

Leverage the momentum of those quick wins by creating longer-term goals for systemic cost improvements. CFOs and CDOs must become more symbiotic in the cloud data world, so having more cost transparency coupled with those quick wins will go a long way.

Where to go from here?

All of the above are important to any cost control capability you undertake, whether you use 3rd party tools, your CDP’s features, a custom set of services, or some combination. There is rarely a single solution to any problem. I recommend forming your team now, getting started ASAP (you’re only one unmonitored resource away from a fire drill!), and putting the right resources in place to surface, monitor, and optimize cloud cost and usage.

So next time you have a call with the CFO, she may just be saying, “Great job! Look how much money we saved this month”.

If you want to know more about the modern data stack, follow me on LinkedIn and Twitter for articles, posts, and live stream interviews.

ThoughtSpot provides powerful search and AI-driven self-service analytics tools that make it easy for you to find and create insights. Ready to get started? Start your free trial of ThoughtSpot today!